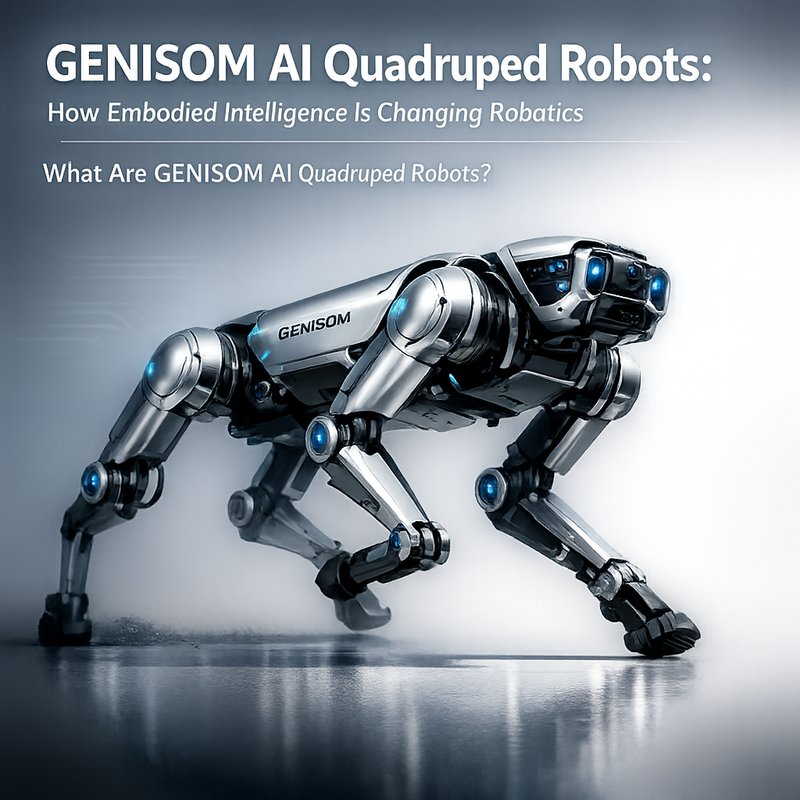

GENISOM AI Quadruped Robots: How Embodied Intelligence Is Changing Robotics

Adolfo Usier, June 10, 2026

GENISOM AI quadruped robots are a new type of robot that can walk, run, and learn from the world. Their embodied intelligence lets them adapt quickly, making them useful for search and rescue, farming, delivery, and more.